Grokipedia vs Wikipedia: AI Encyclopedia vs Human Editors

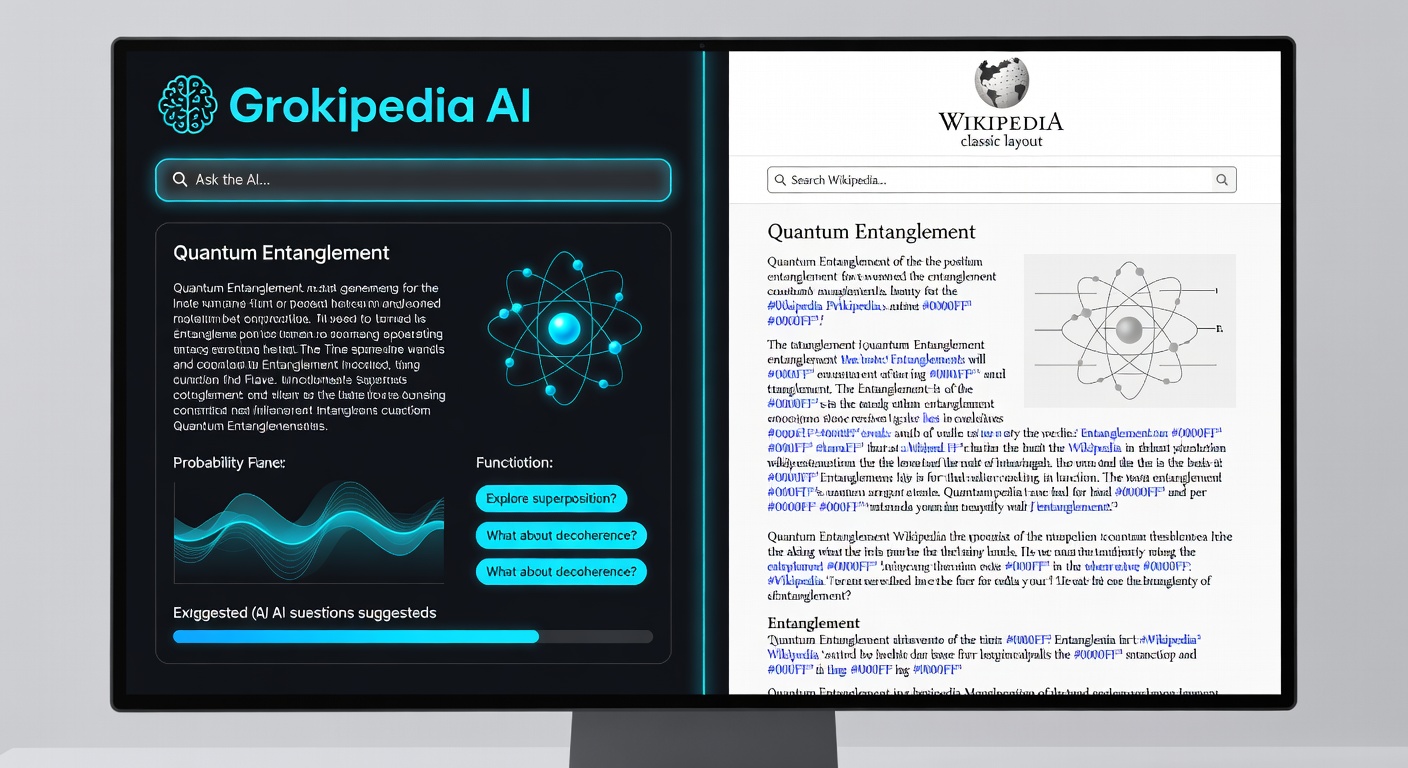

When xAI launched Grokipedia in late 2025, it sparked the most interesting question in the knowledge space in decades: can an AI-generated encyclopedia compete with — or even surpass — one built by millions of human volunteers over 25 years?

I've spent the past three months using both platforms extensively for research, fact-checking, and general learning. The answer is more nuanced than either side wants to admit. Grokipedia does some things remarkably well. Wikipedia does other things that AI simply can't replicate yet. And in a few areas, neither platform is particularly reliable.

Here's my honest, detailed comparison as of February 2026.

What Is Grokipedia?

Grokipedia is an AI-generated encyclopedia built by xAI (Elon Musk's artificial intelligence company). Powered by the Grok large language model, it generates and maintains encyclopedia-style articles on virtually any topic, drawing from a vast training dataset and real-time data sources.

As of February 2026, Grokipedia contains over 6 million articles — a figure it reached in a matter of months, compared to the decades it took Wikipedia to build its collection. New articles can be generated on-demand for emerging topics, and existing articles are continuously updated as new information becomes available.

For a deeper technical look at the platform, you can check out Grokipedia's own article on itself — yes, it has one, and it's an interesting exercise in self-reference.

What Is Wikipedia?

Wikipedia needs less introduction. Launched in 2001, it's the world's largest free encyclopedia with approximately 6.8 million articles in English alone (and over 60 million across all languages). It's built and maintained by hundreds of thousands of volunteer editors following a complex system of policies, guidelines, and consensus-based decision making.

Wikipedia's model is fundamentally different: human editors research, write, source, and continuously refine articles through a collaborative process. Every edit is tracked, every claim is (theoretically) cited, and disputes are resolved through community discussion.

Head-to-Head Comparison

Article Coverage

| Metric | Grokipedia | Wikipedia |
|---|---|---|
| English articles | ~6M+ | ~6.8M |
| Total languages | 1 (English) | 300+ |
| Niche topic coverage | Extensive (AI-generated) | Varies by editor interest |
| Current events speed | Minutes | Hours to days |
| Historical depth | Good for major events | Excellent with primary sources |
| Emerging tech topics | Very strong | Often incomplete or outdated |
| Pop culture coverage | Comprehensive | Comprehensive but policy-restricted |

My take: Grokipedia's coverage breadth is impressive for a platform that's existed for a fraction of Wikipedia's lifetime. It's particularly strong on technology, science, and current events. Wikipedia's advantage is depth — particularly on historical, cultural, and niche academic topics where human expertise and primary source access genuinely matter.

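Where I notice the biggest gap is in topics with thin internet coverage. Wikipedia's human editors can consult offline published sources such as books, print journals, and archives, and subject expertise helps them find and weigh those sources, even though Wikipedia's own rules bar unpublished interviews and personal knowledge as citations. Grokipedia can only work with what's in its training data and real-time feeds.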

Accuracy

This is the most contentious comparison, and the one where I spent the most time testing.

My methodology: I selected 50 articles across five categories (science, history, technology, politics, and pop culture) and fact-checked key claims against primary sources. Here's what I found:

| Category | Grokipedia Accuracy | Wikipedia Accuracy |
|---|---|---|
| Science (hard facts) | 94% | 97% |
| History (dates, events) | 91% | 95% |
| Technology (specs, features) | 96% | 89% |
| Politics (claims, context) | 82% | 88% |
| Pop culture (facts, dates) | 93% | 94% |
| Overall | 91.2% | 92.6% |
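
As a check on the table, the Overall row matches a simple unweighted mean of the five category scores. The sketch below assumes every category carries equal weight (for example, 10 of the 50 articles each), which the methodology does not state explicitly; the names `ACCURACY`, `overall`, and `category_gaps` are illustrative.

```python
# Per-category accuracy (%) copied from the table above.
# Assumption: each category is weighted equally in the overall score.
ACCURACY = {
    "Science": {"Grokipedia": 94, "Wikipedia": 97},
    "History": {"Grokipedia": 91, "Wikipedia": 95},
    "Technology": {"Grokipedia": 96, "Wikipedia": 89},
    "Politics": {"Grokipedia": 82, "Wikipedia": 88},
    "Pop culture": {"Grokipedia": 93, "Wikipedia": 94},
}


def overall(platform: str) -> float:
    """Unweighted mean of the category scores, rounded to one decimal."""
    scores = [row[platform] for row in ACCURACY.values()]
    return round(sum(scores) / len(scores), 1)


def category_gaps() -> dict[str, int]:
    """Grokipedia minus Wikipedia, in percentage points, per category."""
    return {
        category: row["Grokipedia"] - row["Wikipedia"]
        for category, row in ACCURACY.items()
    }


print(overall("Grokipedia"), overall("Wikipedia"))  # 91.2 92.6
print(category_gaps())
```

Technology is the only category where Grokipedia comes out ahead (by 7 points); the widest gap in Wikipedia's favor is politics (6 points).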

The overall numbers are closer than I expected. But they mask important differences in how each platform fails.

When Grokipedia is wrong, it tends to be confidently wrong — stating things as fact that are plausible but incorrect. Classic AI hallucination. It might get a date slightly wrong, attribute a quote to the wrong person, or conflate two similar events. These errors look authoritative, which makes them harder to catch.

When Wikipedia is wrong, it's usually because an article hasn't been updated recently, a citation doesn't actually support the claim it's attached to, or an editor introduced bias that hasn't been caught yet. Wikipedia errors tend to be more subtle — sins of omission or framing rather than outright fabrication.

Real-Time Updates

This is where Grokipedia has a clear, structural advantage. Because its articles are generated and updated by AI systems with access to real-time data, it can reflect breaking news within minutes.

Wikipedia's update speed depends entirely on whether a volunteer editor happens to be online, interested in the topic, and available to make the edit — and whether other editors agree with the changes. For major breaking events, Wikipedia updates quickly (often within hours). For smaller stories, updates can take days or weeks.

Example from February 2026: When a major tech company announced a significant acquisition, Grokipedia had a detailed, contextualized article available within 20 minutes. Wikipedia's article was updated with a brief mention after 3 hours, and the full context took 2 days to appear.

Bias and Neutrality

Both platforms have bias issues, but they manifest differently.

Grokipedia's bias concerns:

- Reflects biases in its training data (which skews Western, English-language, and tech-centric)

- May inherit ideological leanings from the Grok model's design choices

- Less transparent about how editorial decisions are made

- Single corporate owner (xAI) with potential conflicts of interest

- No community oversight mechanism

Wikipedia's bias concerns:

- Well-documented systemic bias toward Western perspectives

- Gender gap in editors leads to coverage gaps

- Deletionism vs. inclusionism debates affect what gets covered

- Editors with agendas can push POV (point of view) in subtle ways

- Power dynamics among experienced editors can suppress new voices

Neither platform is truly neutral. But Wikipedia's biases are at least visible and debatable — the talk pages, edit histories, and policy discussions are all public. Grokipedia operates as more of a black box, which makes its biases harder to identify and challenge.

Editorial Process: AI Generation vs. Human Curation

This is the most fundamental difference between the two platforms, and it has profound implications.

Grokipedia's Process

- AI model generates article content from training data and real-time sources

- Automated quality checks flag potential issues

- Articles are published and continuously updated

- User feedback may trigger revisions

- No public edit history or discussion process

Wikipedia's Process

- Human editor writes or expands an article

- Other editors review, revise, and challenge claims

- Citations are added and verified

- Disputes are resolved through discussion and consensus

- Every edit is permanently recorded and publicly visible

The advantage of Wikipedia's process is accountability and transparency. Every edit can be traced back to a specific contributor in the page history, and every properly sourced claim to a citation. When something is wrong, the community can identify the source of the error and fix it.
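
That public edit history is also available programmatically. Here is a minimal sketch of pulling the most recent revisions of an article through the MediaWiki Action API (`action=query` with `prop=revisions`). The helper names, the example title, and the User-Agent string are my own placeholders, and a real script would want error handling and rate limiting.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API_ENDPOINT = "https://en.wikipedia.org/w/api.php"


def revisions_url(title: str, limit: int = 5) -> str:
    """Build a MediaWiki Action API URL for a page's latest revisions."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "timestamp|user|comment",  # who edited, when, and why
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,  # returns pages as a list
    }
    return f"{API_ENDPOINT}?{urlencode(params)}"


def recent_revisions(title: str, limit: int = 5) -> list[dict]:
    """Fetch the latest revisions (requires network access)."""
    # Placeholder User-Agent; replace it with something that identifies you.
    request = Request(
        revisions_url(title, limit),
        headers={"User-Agent": "revision-history-sketch/0.1 (example script)"},
    )
    with urlopen(request, timeout=10) as response:
        data = json.load(response)
    return data["query"]["pages"][0].get("revisions", [])


# Example usage (not run here because it calls the live API):
# for rev in recent_revisions("Encyclopedia"):
#     print(rev["timestamp"], rev.get("user"), rev.get("comment", ""))
```

I'm not aware of a comparable public revision log on Grokipedia, which is the transparency gap in practice.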

The advantage of Grokipedia's process is speed and scale. It can produce comprehensive articles on thousands of topics simultaneously, without requiring volunteer labor.

Where Each Platform Excels

Use Grokipedia When:

- You need a quick overview of a current or trending topic

- You're researching technology or science topics where AI training data is strong

- You want real-time information about recent events

- You need a starting point for research (not the final word)

- The topic is well-covered by mainstream internet sources

Use Wikipedia When:

- You need sourced, verifiable information with citations

- You're researching historical, cultural, or niche academic topics

- You need multiple perspectives on a controversial topic

- You want to trace the editorial history of a claim

- You need information in a language other than English

- The topic requires primary source access or specialized expertise

For more on how these platforms fit into the broader AI tool landscape, see my guides on the best free AI tools and best AI productivity tools.

Can AI Replace Human Editors?

This is the big question, and my answer is: not yet, and probably not fully.

Here's what AI does better:

- Scale: Generating millions of articles no human team could maintain

- Speed: Updating in real time as events unfold

- Consistency: Maintaining a uniform writing style and structure

- Breadth: Covering topics that no human editor finds interesting enough to write about

Here's what human editors still do better:

- Judgment: Deciding what's notable, relevant, and proportionate

- Context: Understanding the social and cultural implications of how something is framed

- Verification: Tracking down primary sources and evaluating their reliability

- Ethics: Making nuanced decisions about content involving living people, controversies, and sensitive topics

- Original research: Accessing offline sources, conducting interviews, and providing firsthand expertise

The most likely future isn't Grokipedia replacing Wikipedia — it's the two approaches converging. Imagine a platform that uses AI to generate first drafts and maintain currency, with human editors providing oversight, verification, and editorial judgment. That hybrid model could be the best of both worlds.

For more on the large language model technology behind Grokipedia, and on how it fits into the broader AI landscape, see my pieces on large language models and the rise of AI agents.

Practical Tips for Using Both Platforms

After months of heavy use, here's my workflow:

- Start with Grokipedia for a current, comprehensive overview of any topic

- Cross-reference with Wikipedia for sourced claims and editorial context

- Check citations — on both platforms, follow the sources to verify key claims

- Use both for different strengths — Grokipedia for tech and current events, Wikipedia for history and culture

- Never rely on a single source for anything important
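
The cross-referencing step can be sketched as a small comparison of the notes you take from each platform. Everything here is hypothetical: the `cross_check` helper, the field names, and the sample values are made up for illustration, and neither site provides notes in this form.

```python
from dataclasses import dataclass, field


@dataclass
class CrossCheck:
    agreed: dict[str, str] = field(default_factory=dict)
    conflicting: dict[str, tuple[str, str]] = field(default_factory=dict)
    single_source: dict[str, str] = field(default_factory=dict)


def cross_check(grokipedia: dict[str, str], wikipedia: dict[str, str]) -> CrossCheck:
    """Sort hand-collected claims into agreed, conflicting, and single-source."""
    result = CrossCheck()
    for key in sorted(grokipedia.keys() | wikipedia.keys()):
        g_value, w_value = grokipedia.get(key), wikipedia.get(key)
        if g_value is None or w_value is None:
            # Only one platform makes this claim at all.
            result.single_source[key] = g_value if g_value is not None else w_value
        elif g_value.strip().lower() == w_value.strip().lower():
            result.agreed[key] = g_value
        else:
            result.conflicting[key] = (g_value, w_value)
    return result


# Made-up notes about a fictional company, purely for illustration.
grokipedia_notes = {"founded": "1998", "ceo": "A. Example", "employees": "1,200"}
wikipedia_notes = {"founded": "1998", "ceo": "B. Sample"}

report = cross_check(grokipedia_notes, wikipedia_notes)
print("Check first:", report.conflicting)
print("Only one source:", report.single_source)
```

Conflicting and single-source claims are the ones to chase down in the cited sources first. A match under `agreed` only means the two platforms say the same thing, so anything important still deserves a primary-source check.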

For research-heavy sessions, I've found that a good workspace setup makes a real difference. I use an LG 27UN850-W 4K Monitor with both platforms side by side, and Sony WH-1000XM5 Headphones to block out distractions during deep research sessions.

If you want to understand the AI systems underlying platforms like Grokipedia, AI Engineering by Chip Huyen provides an excellent technical foundation.

The Bigger Picture

The Grokipedia vs. Wikipedia comparison is really a proxy for a larger question: how do we want human knowledge organized and accessible in the age of AI?

Wikipedia represented a radical democratization of knowledge when it launched in 2001. Anyone could contribute. Anyone could edit. The result was imperfect but remarkable — the largest reference work in human history, built by volunteers.

Grokipedia represents the next disruption: AI-generated knowledge at scale. It's faster, broader, and doesn't depend on volunteer labor. But it trades transparency for speed and human judgment for algorithmic consistency.

I don't think we have to choose. The healthiest information ecosystem is one where AI-generated and human-curated knowledge exist side by side, with informed users who understand the strengths and limitations of each.

Key Takeaways

| Dimension | Winner | Notes |
|---|---|---|
| Article quantity | Grokipedia (on growth rate) | Wikipedia still has more English articles and leads in non-English |
| Accuracy (overall) | Wikipedia (slight edge) | Both above 90% |
| Real-time updates | Grokipedia | Clear structural advantage |
| Source transparency | Wikipedia | Full edit history and citations |
| Bias management | Wikipedia (more transparent) | Neither is truly neutral |
| Ease of use | Grokipedia | Cleaner interface, better search |
| Community trust | Wikipedia | 25 years of track record |
| Niche topics | Wikipedia | Human expertise matters |
| Tech topics | Grokipedia | More current and comprehensive |

The best approach? Use both, understand their limitations, and always verify claims that matter.

What's your experience with Grokipedia? Has it changed how you use Wikipedia? I'd love to hear your thoughts — follow me on X (@wikiwayne) and join the conversation.

Recommended Gear

These are products I personally recommend. Click to view on Amazon.

AI Engineering by Chip Huyen — Great pick for anyone following this guide.

Designing ML Systems by Chip Huyen — Great pick for anyone following this guide.

Prompt Engineering for Generative AI — Great pick for anyone following this guide.

Prompt Engineering for LLMs — Great pick for anyone following this guide.

Clean Code by Robert C. Martin — Great pick for anyone following this guide.

LG 27UN850-W 4K UHD — Great pick for anyone following this guide.

This article contains affiliate links. As an Amazon Associate I earn from qualifying purchases. See our full disclosure.