The State of AI in February 2026

The AI model landscape has shifted dramatically in the past year. Anthropic released Claude Opus 4.6 with significantly improved reasoning and agentic capabilities. OpenAI launched GPT-5.2 with a focus on multimodal understanding and speed. Google pushed Gemini 3.1 with deep integration across its ecosystem and an enormous context window.

I use all three models daily -- Claude for coding and analysis, ChatGPT for brainstorming and multimodal tasks, and Gemini for research that benefits from Google's data. This comparison is based on months of real-world usage, not just benchmarks, though I will share those too.

If you are deciding which AI assistant to invest your time and money in, this guide will help you make an informed choice.

Quick Comparison: At a Glance

| Feature | Claude Opus 4.6 | GPT-5.2 | Gemini 3.1 |
|---|---|---|---|
| Company | Anthropic | OpenAI | Google |
| Release | Jan 2026 | Dec 2025 | Feb 2026 |
| Context Window | 200K tokens | 256K tokens | 2M tokens |
| Multimodal | Text, images, PDFs | Text, images, audio, video | Text, images, audio, video |
| Code Generation | Excellent | Excellent | Very Good |
| Reasoning | Excellent | Very Good | Very Good |
| Creative Writing | Excellent | Very Good | Good |
| Speed (tokens/sec) | ~85 | ~110 | ~95 |
| API Price (per 1M tokens, in/out) | $15/$75 | $12/$60 | $10/$30 |
| Free Tier | Yes (limited) | Yes (limited) | Yes (generous) |
| Pro Plan | $20/mo | $20/mo | $20/mo |
| Max Plan | $200/mo | $200/mo | N/A |

Reasoning and Analysis

This is where Claude has consistently held an edge, and Opus 4.6 extends that lead. Anthropic has focused heavily on chain-of-thought reasoning and the model's ability to break down complex problems.

My Test: Multi-Step Business Analysis

I gave all three models the same prompt: analyze a fictional company's financials, identify three strategic risks, and recommend actions with estimated ROI for each.

Claude Opus 4.6: Structured its response with clear headers, identified non-obvious risks like supplier concentration, and provided specific ROI calculations with stated assumptions. It also flagged uncertainty in its estimates, which I appreciate.

GPT-5.2: Provided solid analysis with good structure. The risks identified were valid but more conventional. ROI estimates were present but less detailed on assumptions.

Gemini 3.1: Good analysis overall, but tended toward broader, less specific recommendations. It excelled when the prompt explicitly asked for data-backed answers, likely benefiting from training on Google's data.

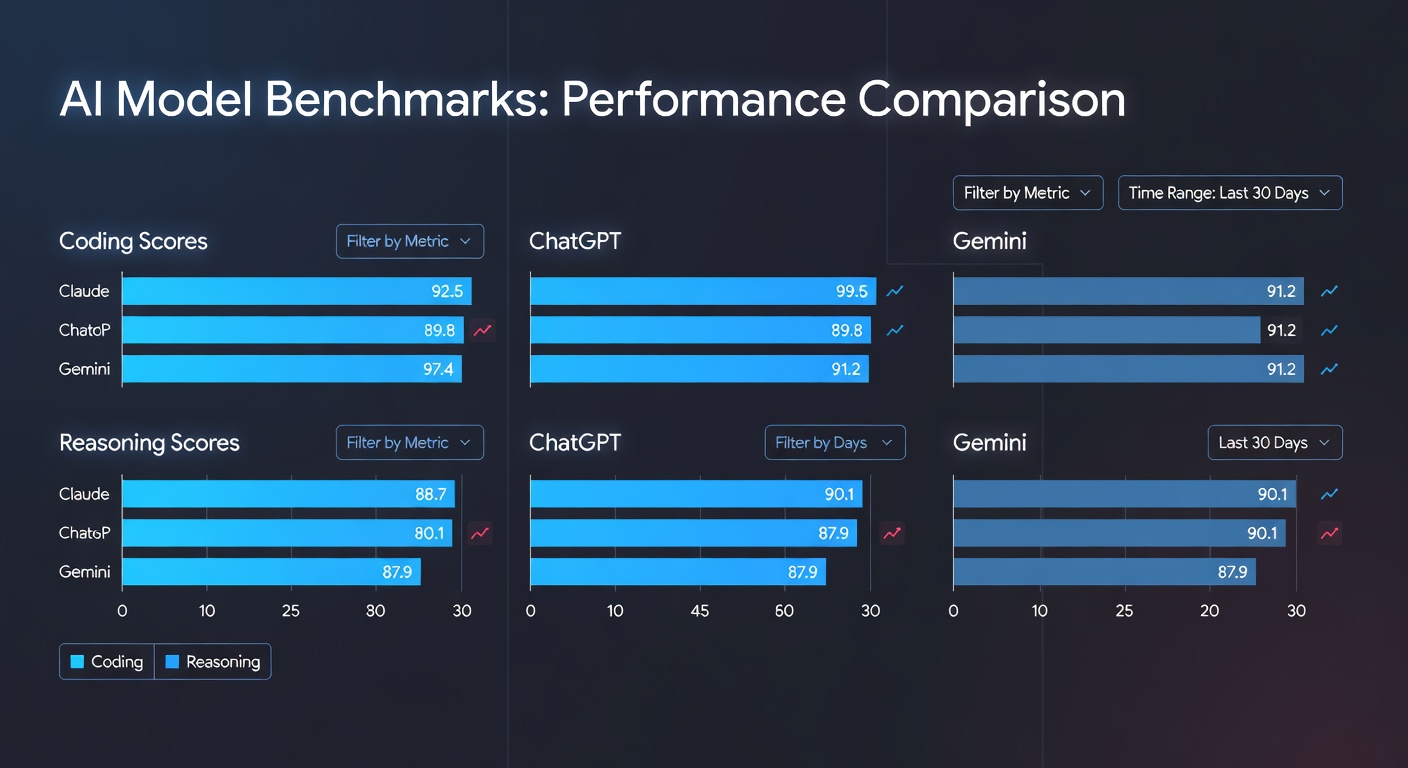

Benchmark Scores (Reasoning)

| Benchmark | Claude Opus 4.6 | GPT-5.2 | Gemini 3.1 |
|---|---|---|---|
| MMLU-Pro | 89.2 | 87.8 | 86.9 |
| GPQA Diamond | 72.1 | 69.4 | 67.8 |
| ARC-Challenge | 96.8 | 95.2 | 94.7 |
| BigBench Hard | 91.5 | 89.7 | 88.2 |

Winner: Claude Opus 4.6 -- consistently strongest on complex reasoning tasks.

Coding Capabilities

All three models are genuinely useful for coding in 2026, but they have different strengths.

My Test: Build a REST API

I asked each model to build a complete REST API with authentication, rate limiting, and database integration using Node.js and PostgreSQL.

Claude Opus 4.6: Generated the most production-ready code out of the box. Included proper error handling, input validation, and security best practices without being asked. The code structure followed clean patterns and the model proactively added helpful comments explaining design decisions.

GPT-5.2: Also produced excellent code. Slightly faster at generating the initial output. The code was clean and functional, though I had to prompt for security hardening. GPT-5.2 excelled at understanding vague requirements and making sensible assumptions.

Gemini 3.1: Produced working code but required more iteration. The initial output sometimes mixed different framework conventions. However, when given existing code to work with, Gemini's enormous context window let it understand large codebases better than the others.

Coding Benchmark Scores

| Benchmark | Claude Opus 4.6 | GPT-5.2 | Gemini 3.1 |
|---|---|---|---|
| HumanEval+ | 93.2 | 92.8 | 89.4 |
| SWE-Bench Verified | 64.8 | 61.2 | 57.5 |
| MBPP+ | 88.7 | 87.9 | 85.1 |
| CodeContests | 34.2 | 32.8 | 30.1 |

Winner: Claude Opus 4.6 -- especially for complex, multi-file coding tasks and agentic coding workflows.

For those serious about AI-assisted coding, our best AI coding assistants guide covers the full tool landscape.

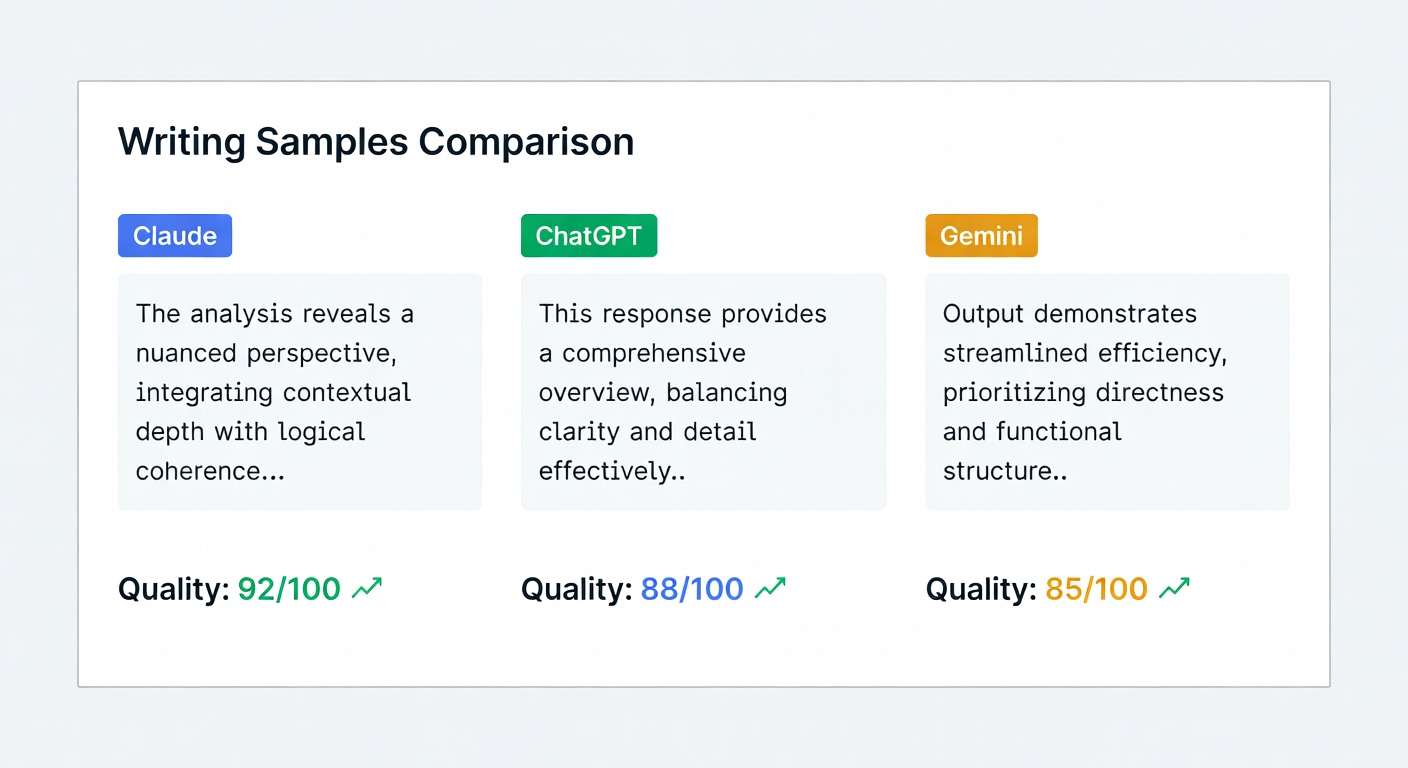

Creative Writing

This category is more subjective, but I have run enough tests to have a clear picture.

My Test: Write a Short Story Opening

All three models received the same creative writing prompt: write the opening 500 words of a literary fiction story about a retired astronaut returning to their hometown.

Claude Opus 4.6: The writing had the most distinctive voice. Subtle, literary, and willing to leave things unsaid. Sentences had varied rhythm and the imagery was specific rather than generic. Claude's writing felt the most "human" to me.

GPT-5.2: Competent and engaging. The prose was polished and accessible. Tended toward more conventional storytelling structures, which is not a bad thing -- it is just a different style. GPT-5.2 is excellent at matching a specified tone or style when prompted.

Gemini 3.1: The weakest in creative writing. The prose was functional but lacked the nuance of the other two. Descriptions tended toward the obvious. However, Gemini has improved significantly from previous versions.

Winner: Claude Opus 4.6 -- the best at nuanced, literary writing. GPT-5.2 is a close second for more commercial or accessible styles.

Multimodal Capabilities

This is where the comparison gets interesting, because each model has taken a different approach.

Image Understanding

All three models can analyze images, but with different strengths:

- Claude Opus 4.6: Excellent at detailed image analysis and document understanding. Great with charts, diagrams, and technical imagery. Cannot generate images natively.

- GPT-5.2: Strong image analysis plus native DALL-E 4 image generation. The best all-in-one for image workflows.

- Gemini 3.1: Very strong image analysis, particularly for real-world photos. Imagen 4 integration for generation. Best at understanding images in context with large amounts of text.

Audio and Video

- Claude: No native audio/video processing yet

- GPT-5.2: Can process audio natively. Advanced Voice Mode is very capable. Video understanding via frame analysis.

- Gemini 3.1: Native audio and video understanding. Can process full YouTube videos. Strongest multimodal capabilities overall.

Winner: Gemini 3.1 for multimodal breadth, GPT-5.2 for image generation workflows.

Context Window and Long-Form Tasks

This is Gemini's clear advantage:

| Model | Context Window | Effective Use |
|---|---|---|
| Claude Opus 4.6 | 200K tokens | ~150K reliably |
| GPT-5.2 | 256K tokens | ~200K reliably |
| Gemini 3.1 | 2M tokens | ~1.5M reliably |

Gemini's 2 million token context window is a game changer for certain workflows. If you need to analyze an entire codebase, a long document collection, or hours of meeting transcripts, Gemini handles it in a single pass where the others require chunking strategies.

That said, for most everyday tasks, 200K tokens is more than enough. I rarely need more than 100K tokens in a single conversation.
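For the cases where a document does exceed the window, the chunking mentioned above might look roughly like the sketch below. It is not any vendor's method: the four-characters-per-token ratio is a rough assumption for English text, and `estimate_tokens` and `chunk_paragraphs` are hypothetical helper names. A real pipeline would count tokens with the provider's own tokenizer.

```python
from typing import List

CHARS_PER_TOKEN = 4  # rough assumption for English text; real tokenizers differ


def estimate_tokens(text: str) -> int:
    """Crude token estimate: about one token per four characters."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def chunk_paragraphs(text: str, max_tokens: int) -> List[str]:
    """Greedily pack paragraphs into chunks that stay under max_tokens.

    A single paragraph larger than the budget becomes its own oversized chunk.
    """
    chunks: List[str] = []
    current: List[str] = []
    current_tokens = 0
    for paragraph in text.split("\n\n"):
        if not paragraph.strip():
            continue  # skip empty paragraphs
        cost = estimate_tokens(paragraph)
        # Close the current chunk if this paragraph would overflow it.
        if current and current_tokens + cost > max_tokens:
            chunks.append("\n\n".join(current))
            current, current_tokens = [], 0
        current.append(paragraph)
        current_tokens += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks


def fits_in_window(text: str, window_tokens: int) -> bool:
    """True if the estimated token count fits in the given context window."""
    return estimate_tokens(text) <= window_tokens
```

With this approach, a transcript that passes `fits_in_window(text, 1_500_000)` could go to Gemini in one pass, while the same text would need `chunk_paragraphs(text, 150_000)` to stay inside the range where Claude was reliable in my use.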

Tool Use and Agentic Capabilities

This category has become critical in 2026 as AI agents move from novelty to daily productivity tools.

| Capability | Claude Opus 4.6 | GPT-5.2 | Gemini 3.1 |
|---|---|---|---|
| Parallel tool calls | Yes | Yes | Limited |
| Tool error recovery | Excellent | Good | Good |
| Multi-step planning | Excellent | Very Good | Good |
| Computer use | Yes | No | No |
| Code execution | Yes (Claude Code) | Yes (Code Interpreter) | Yes (Gemini Code) |

Claude's agentic capabilities are the strongest. The model's ability to plan multi-step workflows, use tools in parallel, and recover gracefully from errors makes it the top choice for AI agent platforms like OpenClaw.

Winner: Claude Opus 4.6 for agentic and tool-use tasks.
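To make "parallel tool calls" and "tool error recovery" concrete, here is a minimal sketch of what an agent harness does around the model, using only Python's standard `asyncio`. It does not use any vendor's API. The names `call_with_retry`, `run_tools_in_parallel`, and `make_flaky_tool` are hypothetical, and the fake tools stand in for real ones such as a search or database call.

```python
import asyncio
from typing import Awaitable, Callable, Dict

ToolFn = Callable[[], Awaitable[str]]


async def call_with_retry(name: str, tool: ToolFn, attempts: int = 3) -> str:
    """Run one tool call, retrying on failure.

    On the final failure, return an error string instead of raising, so one
    broken tool does not abort the others.
    """
    last_error = "no attempts made"
    for _ in range(attempts):
        try:
            return await tool()
        except Exception as exc:
            last_error = str(exc)
            await asyncio.sleep(0)  # placeholder; a real harness would back off
    return f"{name} failed: {last_error}"


async def run_tools_in_parallel(tools: Dict[str, ToolFn]) -> Dict[str, str]:
    """Start every tool call concurrently and collect results by name."""
    names = list(tools)
    results = await asyncio.gather(
        *(call_with_retry(name, tools[name]) for name in names)
    )
    return dict(zip(names, results))


def make_flaky_tool(failures: int) -> ToolFn:
    """Hypothetical tool that fails `failures` times, then returns "ok"."""
    remaining = {"n": failures}

    async def tool() -> str:
        if remaining["n"] > 0:
            remaining["n"] -= 1
            raise RuntimeError("temporary error")
        return "ok"

    return tool


if __name__ == "__main__":
    demo = {"search": make_flaky_tool(1), "db": make_flaky_tool(5)}
    print(asyncio.run(run_tools_in_parallel(demo)))
```

The harness only handles retries and concurrency. What the table rates is the model's side of the loop: deciding which calls can run together and what to do with a result like `"db failed: temporary error"`.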

Safety and Alignment

All three companies have invested heavily in safety, but their approaches differ:

- Anthropic (Claude): Constitutional AI approach. Claude is the most careful about harmful content and tends toward refusal when uncertain. This caution can occasionally be frustrating, but it means Claude rarely produces genuinely problematic output.

- OpenAI (ChatGPT): RLHF-focused approach. GPT-5.2 is well-calibrated and has fewer unnecessary refusals than previous versions.

- Google (Gemini): Combination of approaches. Gemini is generally conservative, similar to Claude, and particularly careful around topics related to public figures.

The ongoing AI safety debate shapes how all of these models evolve.

Pricing Deep Dive

Free Tiers

| Feature | Claude Free | ChatGPT Free | Gemini Free |
|---|---|---|---|
| Model Access | Sonnet 4.5 | GPT-5.2-mini | Gemini 3.1 Flash |
| Daily Messages | ~30 | ~50 | Unlimited |
| File Upload | Yes | Yes | Yes |
| Image Gen | No | Limited | Limited |
| Code Execution | No | No | Yes |

Pro Plans ($20/month)

| Feature | Claude Pro | ChatGPT Plus | Gemini Advanced |
|---|---|---|---|
| Model Access | Opus 4.6 + Sonnet | GPT-5.2 | Gemini 3.1 Pro |
| Usage Limit | 5x free | 5x free | Generous |
| Image Gen | No | DALL-E 4 | Imagen 4 |
| Priority Access | Yes | Yes | Yes |
| Extra Features | Projects, Artifacts | Custom GPTs, Canvas | Google integration |

API Pricing (per 1M tokens)

| Model | Input | Output |
|---|---|---|
| Claude Opus 4.6 | $15.00 | $75.00 |
| Claude Sonnet 4.5 | $3.00 | $15.00 |
| Claude Haiku 4 | $0.25 | $1.25 |
| GPT-5.2 | $12.00 | $60.00 |
| GPT-5.2-mini | $0.50 | $2.00 |
| Gemini 3.1 Pro | $10.00 | $30.00 |
| Gemini 3.1 Flash | $0.15 | $0.60 |

Best Value: Gemini 3.1 Pro offers the best performance-per-dollar at the API level. Claude Haiku 4 and Gemini Flash are the budget champions.
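To see what these rates mean for a real workload, the short sketch below turns the table into a per-request cost. The prices are copied from the table above. The workload of 10,000 input tokens and 1,000 output tokens per request is an assumption for illustration, and `request_cost` is a hypothetical helper name.

```python
from typing import Dict, Tuple

# USD per 1M tokens as (input, output), copied from the API pricing table.
PRICES: Dict[str, Tuple[float, float]] = {
    "Claude Opus 4.6": (15.00, 75.00),
    "Claude Sonnet 4.5": (3.00, 15.00),
    "Claude Haiku 4": (0.25, 1.25),
    "GPT-5.2": (12.00, 60.00),
    "GPT-5.2-mini": (0.50, 2.00),
    "Gemini 3.1 Pro": (10.00, 30.00),
    "Gemini 3.1 Flash": (0.15, 0.60),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the listed per-million-token prices."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Assumed workload: 10,000 input tokens and 1,000 output tokens per request.
    for model in PRICES:
        print(f"{model}: ${request_cost(model, 10_000, 1_000):.4f} per request")
```

Under that assumed workload, Claude Opus 4.6 comes to about $0.225 per request, GPT-5.2 to about $0.18, and Gemini 3.1 Pro to about $0.13. The gap widens for output-heavy workloads, because Gemini's output price is half or less of the other two flagships' rates.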

My Recommendations

Choose Claude If You:

- Do a lot of coding and want the best code quality

- Need strong reasoning for analysis and research

- Use AI agents or agentic workflows

- Value careful, accurate responses

- Write content and want natural-sounding assistance

Choose ChatGPT If You:

- Need multimodal workflows (text + images + audio)

- Want the largest ecosystem of plugins and GPTs

- Prefer speed and low latency

- Need image generation built into your workflow

- Want the most well-known interface

Choose Gemini If You:

- Work with very long documents or codebases

- Are deep in the Google ecosystem

- Need video or audio understanding

- Want the most generous free tier

- Need the best price-to-performance ratio

Or Use All Three

Honestly, this is what I do. I have Claude Pro for coding and writing, ChatGPT Plus for image-related workflows, and Gemini Advanced for research on large document sets. The total is $60/month, and each tool earns its keep.

If you want to go deeper on mastering prompts across all three platforms, check out Prompt Engineering for Generative AI -- it covers techniques that work regardless of which model you use.

For a practical guide to getting started with Claude specifically, see our complete Claude AI beginner's guide.

The Bottom Line

There is no single "best" AI model in 2026. The competition between Anthropic, OpenAI, and Google has been phenomenal for users -- all three models are remarkably capable, and each pushes the others to improve.

If I had to pick one and only one, I would choose Claude Opus 4.6 for its reasoning depth, coding excellence, and the quality of its written output. But I would feel the loss of GPT-5.2's multimodal strengths and Gemini's massive context window.

The real answer is: try all three on your actual tasks and see which one clicks for you. The free tiers are generous enough for meaningful evaluation.

Which AI model do you rely on most? Join the debate on X (@wikiwayne) -- I read every reply and feature the best takes in my newsletter.

Recommended Gear

These are products I personally recommend. Click to view on Amazon.

AI Engineering by Chip Huyen — Great pick for anyone following this guide.

Designing ML Systems by Chip Huyen — Great pick for anyone following this guide.

Prompt Engineering for Generative AI — Great pick for anyone following this guide.

Prompt Engineering for LLMs — Great pick for anyone following this guide.

Logitech MX Keys S Wireless — Great pick for anyone following this guide.

ASUS ProArt PA279CRV 27" 4K — Great pick for anyone following this guide.

This article contains affiliate links. As an Amazon Associate I earn from qualifying purchases. See our full disclosure.